The dictionary defines language as “the principal method of human communication consisting of words used in a structured and conventional way and conveyed by speech, writing, or gesture,” and as “a system of communication used by a particular country or community.”

This still rings true if we consider how people can now use language as the creative compass to drive results using artificial intelligence platforms such as ChatGPT, Midjourney, DALL-E, Photoshop Generative Fill, Stable Diffusion, or others. The better and more precise the language entered when using these tools, the better the chance that the end result will be satisfying and workable for the creator using the platform.

How Did We Get Here?

If it feels like you woke up one day and these magical tools had simply appeared, you might wonder how AI became so mainstream. In reality, researchers have been trying to build machines that can actually understand humans for quite a long time, and it has been a difficult proposition. The reason is that if a machine could understand human language, couldn’t it, by extension, think like a human?

Language, therefore, has been front and center of the challenge all along.

In 1950, Alan Turing wrote a paper outlining the challenge. The test he proposed, whether machine-made language can be indistinguishable from a human’s, is now known as “The Turing Test.” You could argue that we haven’t hit that mark yet, but we’re getting close.

Researchers have been working on this challenge ever since. In 2017, Google researchers published a breakthrough paper that introduced what’s known as a “transformer.” Their innovation was to parallelize language processing. This means that all of the tokens in a body of text are processed and analyzed simultaneously rather than one after another. The key mechanism is known as “attention.” Attention pushes the AI model to consider the relationships between the words and weigh which ones matter most, in other words, what it “should pay attention to” and use.
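If you are curious what “attention” looks like in practice, here is a minimal sketch in plain Python. It is a toy, not how any real AI platform is built: the three tokens and their two-number vectors are made up for illustration, and each vector stands in as its own query, key, and value, whereas a real transformer learns separate versions of each.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product: a simple measure of how similar two vectors are."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Toy scaled dot-product self-attention.

    Assumption for illustration: each token vector is used as its own
    query, key, and value. Real models learn separate projections.
    """
    dim = len(vectors[0])
    scale = math.sqrt(dim)
    weights = []
    # Each row depends only on the inputs, never on another row, which is
    # why real hardware can compute every token's row at the same time.
    for query in vectors:
        scores = [dot(query, key) / scale for key in vectors]
        weights.append(softmax(scores))
    # Each output is a blend of all token vectors, mixed by the weights.
    outputs = [
        [sum(w * v[i] for w, v in zip(row, vectors)) for i in range(dim)]
        for row in weights
    ]
    return weights, outputs

# Hypothetical tokens with made-up 2-number "meanings"
tokens = ["work", "boots", "the"]
vectors = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]

weights, outputs = self_attention(vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each printed row shows how much one token “pays attention to” every token in the text. With these made-up numbers, “work” gives more weight to “boots” than to “the,” because their vectors are more alike.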

These days, the AI tools that researchers and developers have created are far more advanced. It feels like things are hurtling down the road at breakneck speed. New tools, apps, and versions are being launched continually.

In 1962, author Arthur C. Clarke wrote, “Any sufficiently advanced technology is indistinguishable from magic.” Boy, was he correct. Doesn’t it feel like magic?

Using Language For Creation

While there are plenty of skeptics, naysayers, and those who steadfastly refuse to use AI in anything they do, there is little doubt that AI is changing life as we know it on this planet. It is everywhere. And yet, it feels like we are just getting started.

But this article can’t be about everything. It is about how language can be used to create an image. And, in fact, the better the language used, the more fine-tuned the results will be.

For our example, let’s begin with some simple language. We have a project to complete, and it is for a business that sells workwear boots, mostly to industrial and construction workers. They need a new logo.

In Midjourney, let’s use the prompt: a pair of work boots.

If you haven’t used Midjourney yet, it returns the results based on the prompt language and gives you a grid of four images. You can choose the one you like and begin working with it, or start over.

This is an amazing result. It was rendered in under one minute, and each of the four images is completely original. They don’t exist anywhere else on the planet.

Could you have imagined (no pun intended) that you would be able to receive an image of this quality from such a simple prompt?

A pair of work boots.

But remember, we are creating a logo for a workwear shoe store. The result returned above would be an OK place to start, and we could refine the image from there.

However, if we use more refined language in the prompt, we can achieve this type of image:

The language used was a little different. Here is the prompt: New work boots, in the style of product photography, hedcut, black and white line drawing, flat white background, graphic, thick black outline.

Midjourney returned this image quickly. It took about a minute to render. How long would it have taken you or an illustrator to draw the boots?

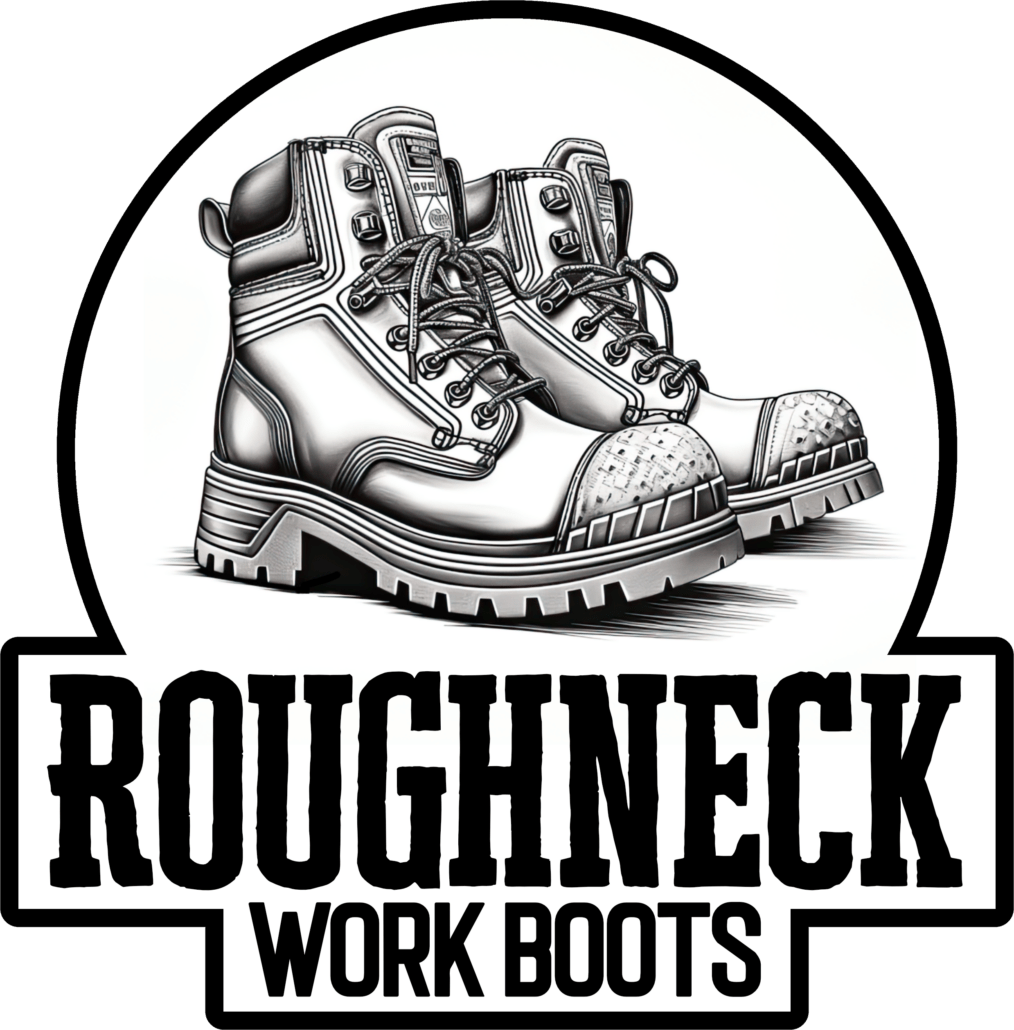

Are the boots in the four images in the grid perfect? No. We have some clean-up to do. If we choose one, upscale it, reroll it, and add some type, in about ten minutes of layout work, here’s what could be presented to the customer:

Specific Language Was Used

To fine-tune the image from just “work boots” to something that could work for a graphic, more specific language was used to give Midjourney instructions on what to imagine.

New Work Boots – Not banged up, dirty, or worn. Brand new. Clean and perfect.

In the style of product photography – Meaning, this should look like other work boots that have appeared in catalogs, websites, advertisements, and marketing. It needs to look the part for the logo. “In the style of” also signals that we want the look, but NOT photography. Just the style.

Hedcut – This is the black and white line drawing style that was invented by The Wall Street Journal. It has a specific look and feel to it, and is perfect for line art illustrations.

Black and white line drawing – As we used photography earlier in the prompt, this is insurance that the image looks like a graphic, and not a photo.

Flat white background – A “scene” with the boots isn’t needed. The language here says to Midjourney that the object should be presented on a white area, and not to create anything else.

Graphic – Yes. The image should look like a graphic, as it is for a logo.

Thick black outline – Here, we are trying to influence Midjourney to use thicker lines, and be less delicate with the rendering.
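If you like to experiment methodically, the breakdown above can be sketched as a short Python script that assembles the prompt from its individual descriptors. To be clear, `build_prompt` is a hypothetical helper written for this illustration and has nothing to do with Midjourney itself. It only produces the text you would then paste into Midjourney.

```python
# Descriptors copied from the breakdown above, in the same order.
prompt_parts = [
    "New work boots",
    "in the style of product photography",
    "hedcut",
    "black and white line drawing",
    "flat white background",
    "graphic",
    "thick black outline",
]

def build_prompt(parts):
    """Join the non-empty descriptors into one comma-separated prompt.

    Hypothetical helper for illustration only, not part of any tool.
    """
    cleaned = [part.strip() for part in parts if part.strip()]
    return ", ".join(cleaned)

prompt = build_prompt(prompt_parts)
print(prompt)
```

Keeping each descriptor on its own line makes it easy to swap one out, rerun, and compare what a single change in language does to the image.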

The Genie is Out of the Bottle

While the doom and gloom folks are wringing their hands about AI, you need to be paying attention. People are worried that AI will take their jobs.

Let’s be clear. AI is NOT going to take your job. People using AI will.

The results are too good. It’s too fast. And frankly, the results are usually better than what the people complaining about it can muster. Could you have drawn the boots pictured above?

But what drives AI to work the way that you intend is all based on the language that you use. This is where the growth will happen. Are you experimenting and discovering what words give you the best results? You should.

Language indeed is the new paintbrush. What are you creating?

“The limits of my language mean the limits of my world.” – Ludwig Wittgenstein

“Language exerts a hidden power, like the moon on the tides.” – Rita Mae Brown

“Of all of our inventions for mass communication, pictures still speak the most universally understood language.” – Walt Disney

Help Support This Blog

While I may be goofing around with AI for some projects, this blog and its contents have been created by me, Marshall Atkinson. I occasionally use AI tools such as Midjourney or OpenAI. Tools are meant to be used, and as a human being, I can control them.

Why am I writing this? To remind you, dear reader, these words are backed by a real person. With experience, flaws, successes, and failures… That’s where growth and learning happen. By putting in the work.

If you are reading this and it is not on my website, it has been stolen without my permission by some autobot. Please report this to me and/or publicly out the website that hijacked it. And if you are trying to copy and use it without my permission, you are stealing. Didn’t your mama teach you better?
